Internet Security – Part 2: How and Why do data breaches occur?

How and Why do security breaches occur?

Why do breaches occur?

Before looking at how security breaches occur it may be worth asking WHY they are allowed to occur.

If we return to our real-world example of earlier, securing a physical bank is well understood. You fit strong doors and vaults, complex locks, sophisticated alarms and have rigid procedures in place to control who has access to keys with tiered authorities to protect increasingly valuable assets. Such measures are proven to be effective against the “attack surface” presented by a physical bank – essentially, bad people must gain physical access to the bank and its vaults before harm occurs. Some rigorous record keeping and controls keep unauthorised staff away from customers’ personal storage vaults … etc. The security system works well.

Protections like these in the physical world have been in place and well understood for hundreds of years. The concept of keys and alarms and weighty doors is understood by everyone from the office cleaners up to the main board directors.

Turning to the virtual world, a couple of things become glaringly obvious.

- First, the attack surface (analogy: the number of doors and windows that may be accidentally left open for the bad guys to squeeze through) is very much larger (a factor I’ll return to).

- Second, the way the locks and alarms work is not understood at all by the majority of people who use or rely on them – all the way up to main board directors.

All this is another way of saying that, to most people, the subject of securing a public-facing IT system (bank application, shop, social media site …) on the Internet is frighteningly complex. So complex – they believe – that they simply wash their hands of the matter. Two responses can then occur:

- the organisation just ignores the situation, telling themselves “nobody will be interested in us” or,

- it hands the problem over to “IT security specialists”.

Response number (1) is nothing more than a dereliction of duty. The fact is that bad people are interested in even small targets (something as small as a home internet router or CCTV camera is of use to a hacker).

(The relatively tiny networks operated by Anadigi (parent organisation of biznik) – one public server cluster that provides our web sites, email, phone and other public-facing provision and the “home office” network that has to deal with everything from email and phone communications through media streaming to CCTV security – fend off several thousand attack attempts each day. If we didn’t use mechanisms that shut out all access to external rogues after their first few attempts at forcing their way in that number would be in the hundreds of thousands each day.)
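The "shut out after the first few attempts" mechanism mentioned above can be sketched roughly as follows. This is a minimal illustration, not a description of the tooling Anadigi actually runs: the class name `AttemptTracker` and the three thresholds are assumptions chosen for the example.

```python
import time
from collections import defaultdict, deque

# Hypothetical thresholds, chosen only for illustration.
MAX_FAILURES = 3        # "first few attempts"
WINDOW_SECONDS = 600    # failures are counted inside this sliding window
BAN_SECONDS = 86400     # how long an offending address stays shut out


class AttemptTracker:
    """Counts failed attempts per source address and bans repeat offenders."""

    def __init__(self):
        self._failures = defaultdict(deque)  # address -> recent failure times
        self._banned_until = {}              # address -> time the ban expires

    def is_banned(self, address, now=None):
        now = time.time() if now is None else now
        return self._banned_until.get(address, 0.0) > now

    def record_failure(self, address, now=None):
        """Record one failed attempt; return True if the address is now banned."""
        now = time.time() if now is None else now
        recent = self._failures[address]
        recent.append(now)
        # Discard failures that have aged out of the window.
        while recent and now - recent[0] > WINDOW_SECONDS:
            recent.popleft()
        if len(recent) >= MAX_FAILURES:
            self._banned_until[address] = now + BAN_SECONDS
            recent.clear()
        return self.is_banned(address, now)
```

In a real deployment the ban would be enforced at the firewall rather than in application code, so that a banned address never reaches the service at all.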

Response number (2) is typical of medium to large organisations – the thinking being, hand the job to an external specialist, get a certificate saying everything is AOK and our backs are covered – end of problem. Should anything nasty happen, we can blame the external specialists.

Both responses are wrong in easily understood ways. But they share a common factor – MONEY! The simple fact is that the job of properly securing a publicly facing IT system is time-consuming, expensive and requires both rigorous implementation and operation of procedures – that must be monitored constantly.

Whether expense arises from the need for network monitoring and protection equipment, time spent learning and configuring the new system – or the cost of hiring external “specialists” it needs MONEY. The spending of (relative to any organisation size) significant sums of MONEY.

So, we come to the answer to WHY system security breaches occur. Owners prioritise money generation over money spent on making sure all the doors and windows are securely locked and everything is bolted down tight.

How much easier it is to just look at an IT system as if it were a physical bank. When you build a new one, put in the current “state-of-the-art” security and then set-and-forget while it goes about its work of generating cash for the business running it.

While the design and technology behind physical locks and alarms develops, it does so only slowly. A review every five years or so may be plenty good enough.

In IT circles, five years is the equivalent of several lifetimes. The list of technological threats that arise from software bugs, changes in security or other protocol standards is alone reason enough to require constant training, monitoring and updating of public-facing IT systems.

Companies and organisations pay scant attention to securing their customers’ personal data and possessions (or the personal data they have ‘acquired’ by tracking the user in order to build valuable profiles of the individual) for one simple reason. Until very recently it has been far, far cheaper to pay for a public relations whitewash than spend the time and money ensuring that the design of the IT systems deployed and the way they are operated and the staff that operate them are fit for purpose from a security viewpoint.

Stated simply, it’s cheaper to play security last than security first.

Hence, development staff are pressured to put new “features” in place as quickly as possible without anyone paying attention to – let alone conducting oversight of – the security implications involved.

Hence we see Facebook saying “Oops – we didn’t mean that to happen” as time and time again some major breach of customer personal data occurs (eg; the way that Cambridge Analytica obtained personal data on 87 million Facebook users through use of a deceptive personality quiz – which took advantage of an interface Facebook had implemented to make it easier for external developers to create such games without thinking through the security implications).

How do breaches occur?

How many fingers and toes do you have? They’re not enough to count the ways that security breaches occur. I’m going to concentrate on the four main ways that data “gets lost” from an IT system. Consider the list in order of likelihood.

- Obtaining the keys. Either persuading a user to hand over their keys (eg; a “phishing” attack) or using some other method that gets the keys to the safe.

- Bugs or flaws in software or hardware. I am constantly amazed at how many companies and organisations fail to apply security patches and other security related updates to their systems – eg; updating to a newer, stronger encryption method only long after the one in use has been compromised, or simply failing to install patches for recently discovered security flaws – many of which are already being actively exploited by the time the corrective patch is issued.

- Poor system design. Some systems are so badly designed they may as well hang a sign on the front page that reads “Welcome – Come on in and help yourself”.

- Human error. From passwords written on post-it notes stuck all over the office through password sharing, clicking unknown links in scam emails to installing trojans and malware through various means, human error is one of – if not the – most widely and easily used means to gain unauthorised access to a system. Under this heading should be included the many examples of the biggest data breaches of all – when an IT person spins up a new cloud instance and copies an organisation’s entire database to it … forgetting to secure access to the new instance and database so leaving it open to any passing hacker to take a copy.

Obtaining the keys

In the late 1980s I was at lunch with the Chairman and IT Director of a major UK bank, alongside a colleague who specialised in IT systems security. The bank Chairman brought up the subject of the new IT security measures his company had recently installed and, while his IT Director beamed smugly, proudly announced that his bank’s systems were now “absolutely impenetrable” to outside attack. He claimed it was now impossible for “anyone” to gain access to the bank’s systems. My colleague listened – then offered a wager (a good bottle of wine to the winner) claiming that he could access the bank’s systems within a week.

After much scoffing and guffawing the Chairman said he’d take that bet.

Three days later my colleague hand-delivered an envelope containing the Chairman’s previous six months payslips, the minutes of the Bank’s last three Board meetings, a copy of the list of phone calls made from the Chairman’s office in the past week … and a couple of statements showing the transfer of £10 from the current account of the IT Director to the current account of the Chairman – which my colleague had instigated. Just to prove he now had access to anything he desired within the Bank’s systems.

What high-tech skulduggery had been used to gain such deep inside access?

My colleague had copied some cute pictures of cats on to a couple of dozen USB keys … as well as some simple key-logging software. He then walked round the square outside the Bank’s headquarters one morning scattering the keys on the ground. Human curiosity then took over. Bank workers leaving the building for lunch found the USB keys, took them back to work … and inserted them into their PCs and spent time looking at the cute kitty-pics. Meanwhile, the key-logger software installed itself in the background and, over the next two days, sent enough login IDs and passwords to allow my colleague to access the bank’s systems without breaking sweat.

He enjoyed a very nice bottle of wine. The IT director was (a little unfairly) sacked.

OK – that trick doesn’t work so well in 2018. Current generation PCs don’t automatically run software they find on a USB stick or CD and most corporates are wise enough to bolt down any USB ports to prevent files going in or out of the building that way. Employees can no longer waste time looking at cute kittens – but it’s also impossible to either silently install malware or (as TV shows still pretend) simply plug in a USB key and hoover up all the files on a secure system!

But … the point to learn is that the simplest and easiest way to compromise any security system is to exploit some human weakness or persuade a human to voluntarily hand over their keys without complaint. The modern simple equivalent is some variant on a “phishing” campaign – an email is sent to the target user appearing to come from a valid organisation, complete with logos, signatures, links to help pages etc, telling the reader that their… “account has been compromised and their credentials must be reset” / “mailbox is full and they must login to clear it”…

The smart, trained user knows enough to check the actual sender address and the URL of the link they are asked to click. But, send 1,000 emails and the hacker needs only 0.1% of the recipients to click the link and … SUCCESS! Access granted, keys obtained.
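The "check the URL of the link" step can be illustrated with a short sketch. It only tests whether a link's host really belongs to the domain the email claims to come from; the function name `link_belongs_to` and the example domains are assumptions for illustration, and a real mail filter would check far more than this.

```python
from urllib.parse import urlsplit


def link_belongs_to(url: str, trusted_domain: str) -> bool:
    """Return True only if the link's host is the trusted domain or a subdomain of it."""
    # urlsplit().hostname drops any "user@" prefix and the port, and lower-cases the host.
    host = (urlsplit(url).hostname or "").rstrip(".")
    trusted = trusted_domain.lower()
    return host == trusted or host.endswith("." + trusted)


# A genuine link and two classic look-alikes (all domains are made up):
print(link_belongs_to("https://www.bank.example/login", "bank.example"))
print(link_belongs_to("https://bank.example.evil.example/login", "bank.example"))
print(link_belongs_to("https://bank.example@evil.example/reset", "bank.example"))
```

The two look-alike links fail the check even though "bank.example" appears prominently in both, which is exactly the detail a hurried reader misses.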

Everyone will be well aware of the technique when emails arrive supposedly from organisations with whom you have no relationship containing messages such as those above. Those emails are just sprayed out at random by the million … and they are successful. The response rate needs only be tiny for the hacker to gain access to bank accounts or systems. Phishing emails can be very sophisticated – even IT professionals have been caught out.

Walk around any office and you’ll still see keyboards and cubicles plastered with post-it notes displaying login ID/password reminders that any visitor can easily note or snap with their phone.

There are many variants of human exploit. Phone calls from “the IT Support Team” asking for login details work more often than not – and if that fails, telling someone that new company policy requires them to install desktop sharing software to enable team meetings and remote support usually gets the job done.

Next time you read about a few tens of million user records going missing understand that the most likely background story (that the embarrassed organisation will never admit) is that an employee GAVE the hacker access. Claims that some sophisticated hacking software or a hitherto unknown vulnerability had been used to obtain the data that is lost often hide the fact that some person simply screwed up.

Sometimes there are no keys! Unbelievable? A simple web search will reveal dozens of data losses caused by careless IT staff who (perhaps while maintaining a main system) simply opened another virtual machine instance on a cloud service, copied a backup to that machine … but forgot to password protect the temporary machine.

It doesn’t matter how secure the main system is if the operating staff are idiots who can’t follow basic safety protocols.

And, yes, there are people constantly scanning the Internet … probing for just such openings and looking to see whatever haul of treasure might be lying around just waiting to be picked up.

Easiest way to breach a computer system? Ask a human nicely … or just wait until they slip up.

Bugs or flaws in software or hardware

It’s true. All software contains bugs (flaws or mistakes) in the code. Even the most fundamental pieces of software in any system (say the network communications protocol) are subject to flaws – some of which lie undiscovered for years.

Most of these flaws go unnoticed and unexploited as they are fixed before news of their existence comes to light. So … as long as all machines in a system are kept up to date things are fine. Right?

Sadly no. Teams of researchers working worldwide constantly try to find bugs or new ways to exploit hardware and software. Where a workable exploit is found, the developer or manufacturer is informed and given time to correct the problem and distribute the resulting patch before the exploit details are published.

But sometimes, workable exploits are discovered by equally skilled and diligent bad hackers – and turned into malware that is then spread as fast as possible so as to infect the maximum number of devices before the flaw can be patched. These so-called “zero-day” exploits can affect any IT system connected to the Internet and are the basis of many “state sponsored” hacking exploits. Little can be done to defend against such flaws (apart from following general good system operating practice that denies access to everything other than the parts of the system that have to be exposed in order to deliver services). That said, I strongly suspect that the number of times “state actors” are blamed for some huge (and potentially embarrassing and costly) data loss is far greater than the number of times a state actor actually needed a zero-day. Any state actor worth its salt will just take advantage of a human exploit or a system that hasn’t been kept up to date.

Poor system design

The final category I want to discuss is poor system design. As a system designer of many decades standing I find this unforgivable.

I have already discussed the way that Facebook has allowed third parties almost unfettered access to its customers’ personal data – all without their knowledge or permission, to be used in ways that I suspect no customer would agree to. I could discuss Yahoo – or Google – or Ebay … or any of the hundreds of personal data holding companies whose lax security has led to the loss of personal “keys” and confidential information over the years.

Instead, I’d like to look at an example of how bad systems design married to appalling operating practice and the wilful ignorance of main board directors charged with looking after trivial matters like customer security pans out.

Let’s look at French bank Credit Agricole (“CA” @CreditAgricole). Users of its on-line banking service are asked to log in with

- their full 11-digit main account number as ID

- a self-chosen 6-digit password

So, what’s wrong? Compare this to a more typical on-line banking system where we might see:

- A customer ID (login ID) issued by the bank that bears no relationship to the customer’s account number or other information that might become publicly available. In other words, there is no way to convert or discern the account number from the customer ID or the customer ID from the account number. The customer ID is known only to the customer.

- A password usually comprising at least 8 characters that may be a mix of (again, at least) digits and letters. Again, this information is known only to the customer.

- A “memorable word” of arbitrary length and complexity. Once more, this is known only to the customer.

- At login, the bank asks for the full customer ID followed by a random selection of characters from the password (say, the 3rd, 6th and 9th characters) followed by another random selection of characters from the memorable word (eg; the 4th, 8th and 12th characters).

- NOTE: None of the login information is either public (eg; an account code) nor can it be derived or guessed at from publicly available information. Then, only a small part of the password and memorable word fields are requested – NEVER the full code strings – in this way, even if some bad actor manages to listen in to the (properly encrypted) data stream (or use key-logging, session replay or some other malware to watch) they never gain access to the full login details as each subsequent login attempt will ask for a different random combination of two separate secrets. Even if the encrypted communication line is broken, a criminal would have to monitor dozens or hundreds of logins to harvest the full password and memorable word data.

- The best use 2nd factor authentication (“2FA”) – a mechanism which requires a user to have a second, usually physical, means of identification before gaining access to an account. A simple example is where a web-site sends a one-time code to your mobile phone which you must then enter into the login screen … correct entry of this access code ‘proves’ you have access to the phone so acts as a secondary check that you are authorised to login. Better is a physical “key” (eg; a USB key pre-programmed with your secret, encrypted identity).
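The "random selection of characters" login step in the list above can be sketched as follows. This is an illustrative outline only: the function names are assumptions, and it glosses over how the bank stores the secret (a scheme like this needs the stored secret in a form the server can index into, so it cannot simply keep a one-way hash).

```python
import hmac
import secrets


def make_challenge(secret_length: int, count: int = 3) -> list[int]:
    """Pick `count` distinct 1-based character positions, in ascending order."""
    rng = secrets.SystemRandom()  # operating-system randomness, not a predictable PRNG
    return sorted(rng.sample(range(1, secret_length + 1), count))


def check_response(secret: str, positions: list[int], response: str) -> bool:
    """Compare the characters the user typed with those at the requested positions."""
    expected = "".join(secret[p - 1] for p in positions)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), response.encode())


# Example: ask for three characters of a 12-character memorable word.
positions = make_challenge(12)
print("Please enter characters", positions, "of your memorable word")
```

Because each login asks for a different subset, anyone watching a single login learns only a fragment of the secret.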

Now look at CA. Everybody who receives a cheque or direct debit instruction or just an electronic payment from a CA customer knows the customer’s account number – therefore immediately knows their on-line login ID. That’s the first half of the problem out of the way then. We are already 50% of the way towards gaining full access to a CA customer’s bank accounts. And, all we’ve done is read something the target customer sent us!

Second, at each login, the full 6 digits of the password must be entered. That’s all 6 digits in the order they were chosen originally. So, if a user chooses “123456” as their secret password then they must enter “123456” every time they want to login.

The bank does not allow letters, punctuation or anything other than the digits 0-9 in the password.

CA also offers no 2FA and requires that all 6 digits of the password are entered on each and every login.
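For comparison, the kind of one-time code check described earlier (a code sent to the customer's phone and typed back into the login screen) needs very little machinery. The sketch below is an assumption-laden illustration: the in-memory `_pending` store, the five-minute lifetime and the function names are all invented for the example, and delivery of the code to the phone is left out entirely.

```python
import hmac
import secrets
import time
from typing import Optional

CODE_TTL_SECONDS = 300  # hypothetical lifetime of a code

_pending = {}  # user id -> (code, expiry time); a real system would use shared storage


def issue_code(user_id: str, now: Optional[float] = None) -> str:
    """Create a fresh 6-digit code for the user and remember when it expires."""
    now = time.time() if now is None else now
    code = f"{secrets.randbelow(10**6):06d}"
    _pending[user_id] = (code, now + CODE_TTL_SECONDS)
    return code  # in practice this is sent to the user's phone, never shown on the web page


def verify_code(user_id: str, submitted: str, now: Optional[float] = None) -> bool:
    """Accept the code once, and only before it expires."""
    now = time.time() if now is None else now
    entry = _pending.pop(user_id, None)  # pop: every code is single-use, right or wrong
    if entry is None:
        return False
    code, expires = entry
    if now > expires:
        return False
    return hmac.compare_digest(code.encode(), submitted.encode())
```

The code is itself only 6 digits, but it changes on every login and dies after one attempt, which is what separates it from a fixed 6-digit password.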

So, the only thing standing between a criminal and a CA customer’s bank accounts is a 6-digit code.

6 digits provide 1 million possible combinations so somebody has obviously decided that correctly guessing the customer’s code is all but impossible. WRONG. Even if a computer program had to try 1,000,000 password combinations it would be the work of a second to attempt them all. I truly hope CA at least locks account access after a given number of failed login attempts – but even if it does, as soon as access is restored the hacking program will be back continuing with its attempts. Eventually it will win.
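The arithmetic behind this is easy to check. The sketch below compares the 6-digit code space with an 8-character letters-and-digits password, then estimates what a lockout does and does not achieve. The lockout figure (3 guesses per account) and the assumption that codes are chosen uniformly at random are mine, purely for illustration.

```python
import string

PIN_SPACE = 10 ** 6  # every 6-digit code from 000000 to 999999

ALPHABET = string.ascii_letters + string.digits  # 62 symbols
PASSWORD_SPACE = len(ALPHABET) ** 8               # 8-character mixed password


def expected_hits(accounts: int, guesses_per_account: int,
                  keyspace: int = PIN_SPACE) -> float:
    """Expected number of accounts opened when each account gets a few distinct
    guesses, assuming every code in the keyspace is equally likely."""
    return accounts * guesses_per_account / keyspace


print("6-digit codes:", PIN_SPACE)
print("8-char passwords are larger by a factor of", PASSWORD_SPACE // PIN_SPACE)
print("accounts opened per pass over 1,000,000 accounts:", expected_hits(1_000_000, 3))
```

A lockout slows an attack on one named account, but an attacker who spreads a handful of guesses across a million account numbers still expects to open a few of them on every pass.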

Ignoring the fact that many customers will simply choose something easily memorable – their or a close relative’s birthday (eg; “110263”) which is a number easily obtained from public records (or any of hundreds of prior data breaches) and probably brings the number of attempts necessary to crack many accounts down from 1,000,000 to … just 2~3!

So, if I’m a hacker and prepared to do a little research (eg; look up the target customer’s Facebook profile) I need only receive a cheque or payment from the customer to access their bank accounts within (say) 3 attempts. Of course, as a hacker, I probably already have the Equifax sourced data dump (or several of the dozens of similar personal data sets “leaked” by companies worldwide) so I may not even have to visit Facebook to find my target’s birthday or family data – I just need to look them up in the data I already have.
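To show how recognisable birthday-style codes are, here is a small sketch of a check that a bank could run when a customer chooses a code, rejecting anything that reads as a calendar date. Nothing in the article says CA (or any bank) does this; the function name and the three date layouts are assumptions for illustration.

```python
from datetime import datetime

# Assumed common ways of writing a date in six digits.
DATE_FORMATS = ("%d%m%y", "%m%d%y", "%y%m%d")


def looks_like_date(code: str) -> bool:
    """Return True if the 6-digit code parses as a real date in any assumed layout."""
    for fmt in DATE_FORMATS:
        try:
            datetime.strptime(code, fmt)
            return True
        except ValueError:
            continue  # not a valid date in this layout, try the next
    return False


print(looks_like_date("110263"))  # the birthday-style example from the text
print(looks_like_date("999999"))
```

Each layout covers only about 36,500 of the 1,000,000 possible codes (one per day across a century), so a customer who picks a date has already thrown away most of the code space before the attacker does any research at all.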

Being as polite as I can be, the CA on-line authentication system is not covered by my definition of adequate security.

What else could possibly go wrong?

Modern browser technology for one. Many modern browsers are configured ‘out-of-the-box’ to offer the convenience of remembering data entered into a web form. Firefox (possibly the most secure mainstream browser currently available) will happily remember and fill in the 11-digit account code field of CA’s login screen. Use a shared computer or login – as many people do at work or at home and it’s not even necessary to receive a cheque or some other transaction to learn the target customer’s login ID – just let the browser fill in the field for you.

Then there is technology currently much beloved of web marketing departments – so much that it has even been seen deployed in the wild by banks – a technology called “session replay”. This is delivered silently as an obfuscated script (ie; a piece of code scrambled so that no normal user would possibly be able to guess what is happening and even professionals have a hard time unscrambling it) embedded in a web page. Working unseen in the background a session replay script can capture every key-stroke, mouse movement and click – including a full ‘live’ screen image that is sent back to a third party’s server allowing anyone with access to that server (eg; a bank employee or an employee of the company providing the session replay recording script … or anybody that has access to the server the data is transmitted to) to replay the user login session – revealing the full 6-digit “secret” code – that only the customer is supposed to know, not even to be revealed to bank staff.

It gets worse. A CA customer calling the bank telephone line is greeted with a recorded message … asking them to key in, using the phone’s keypad, the full 11-digit account number AND the full 6-digit “secret” access code!!! The SAME login credentials used to access the on-line banking system.

Modern phones are full of user conveniences. Such as “Last Number Redial”. So imagine, somebody calling CA from an office phone during their break. They key in their full credentials and in return get greeted by name. What a wonderful service. They finish their banking and go back to work. Along comes someone else … who hits “Last Number Redial” and, on many office phones, they will see the full CA telephone number followed by 17 digits of “secret” login credentials – the exact same key used to access the customer’s accounts on-line. Our criminal doesn’t even have to risk talking to a bank staff member (who may recognise the different voice) – they simply move to a computer, load up the CA website, hit login and enter the codes. Voila! One set of bank accounts hijacked.

Even a phone that doesn’t remember digits keyed in during a call (so will not play them back later) usually still displays them as they are keyed. Busy workplace, customer keys in their “secret” code while somebody stands behind with a camera phone ready to snap or video the phone display. Again, bank accounts hijacked.

And let’s not forget that most company-operated telephone switches (what used to be called a “PBX” but is these days just another computer routing traffic between phones) record all key presses for audit purposes. So … there’s another potential pool of happy CA account breakers – anyone with access to the company phone server log files or call records.

Having raised this security issue in writing with directors of Credit Agricole over three years ago – including a full, keystroke-by-keystroke description of the many ways the bank’s on-line security could be easily breached (there are several others I could trivially think of) I received a response that the bank takes customer security very seriously and is entirely happy with its anti-fraud measures.

My mind boggles.

Just to add to the fun, the bank relies on HTTPS (the supposedly secure and encrypted web page communication protocol) to ‘ensure that customer communications are secure’.

Problem. CA continued to use a web certificate (the mechanism that underpins the entire encryption between user and bank) signed using the SHA-1 algorithm until 2017 – just before all major web browsers refused to connect to websites still using SHA-1 certificates.

The reason the browser companies decided to refuse SHA-1 certificates:

It was first shown as a proof-of-concept as long ago as 2005 that SHA-1 could be broken. US federal agencies were told to stop using SHA-1 for digital signatures after 2010, any organisation still using SHA-1 was advised to update its certificates immediately and, finally, the issue or renewal of SHA-1 based certificates was banned outright in January 2016. In February 2017 Google published a working, practical collision attack that put the matter beyond doubt.

So that’s just 12 years that CA exposed its customers to a signing algorithm with known weaknesses, just 7 years after US federal agencies were told to abandon it and over a full year after the standard was outlawed from the web.

But the bank remained entirely content with the situation – until confronted with the reality that their web-site would effectively disappear from the web in January 2017 as no browser would talk to it – because on a very fundamental technical level the site could not be trusted.

Generic design flaws

In all cases data breaches and personal data loss could easily be mitigated.

To take a simple example – the system needs to retain credit card or other confidential payment details. This may be necessary either because the credit card payments will not be taken until the goods are shipped at some later point in time or because legal and accounting regulations require payment details to be kept.

Fine – there’s little that can be argued against a legal requirement to retain even sensitive data.

However … the way in which that sensitive data is stored makes an enormous difference. A little secret – encrypting a piece of data (credit card details, phone number, social security ID – even your name, address and phone number) requires less than one line of program code. In plain text the code changes from this:

PUT “credit card” in database

to

PUT encrypt(“credit card”) in database

where “encrypt” is a program function that performs the actual work of encrypting the data – in this example a credit card number. The “encrypt” function itself can use any of numerous, modern, secure methods to scramble the data. The best use a secret key – known only to the organisation processing and storing the data (er, just like our real-world bank having a key that opens the door to the bank) – that is stored well away from anywhere Internet accessible.

So, a simple change can safeguard personal data. To the effect that even if some idiot leaves a backup of a couple of billion customer records on an unprotected server exposed to the Internet crooks and spies are welcome to come take a copy – and, unless they also obtained the secret key, spend the next few million years trying to unscramble just one customer record.
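For readers who want to see the "one extra function call" in real code, here is a minimal sketch using Python's built-in `sqlite3` module as the database. The table layout and function names are assumptions for illustration. The `encrypt` and `decrypt` functions are passed in rather than written here, because the actual scrambling should come from a vetted library and never be home-made.

```python
import sqlite3
from typing import Callable


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the database and make sure the (hypothetical) cards table exists."""
    db = sqlite3.connect(path)
    db.execute("CREATE TABLE IF NOT EXISTS cards (customer TEXT PRIMARY KEY, card BLOB)")
    return db


def save_card(db: sqlite3.Connection, customer: str, card_number: str,
              encrypt: Callable[[bytes], bytes]) -> None:
    """Store only the encrypted form; the plain card number never reaches the disk."""
    ciphertext = encrypt(card_number.encode("utf-8"))
    db.execute("INSERT INTO cards (customer, card) VALUES (?, ?)", (customer, ciphertext))


def load_card(db: sqlite3.Connection, customer: str,
              decrypt: Callable[[bytes], bytes]) -> str:
    """Fetch the stored ciphertext and decrypt it with the organisation's key."""
    row = db.execute("SELECT card FROM cards WHERE customer = ?", (customer,)).fetchone()
    return decrypt(row[0]).decode("utf-8")


# One way to wire this up, assuming the third-party `cryptography` package is
# installed and `key` is loaded from storage kept off the Internet-facing machine:
#
#   from cryptography.fernet import Fernet
#   cipher = Fernet(key)
#   save_card(db, "alice", "4111111111111111", cipher.encrypt)
#   load_card(db, "alice", cipher.decrypt)
```

Anyone who copies the database file alone gets only the scrambled column; without the separately stored key it is of no use to them.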

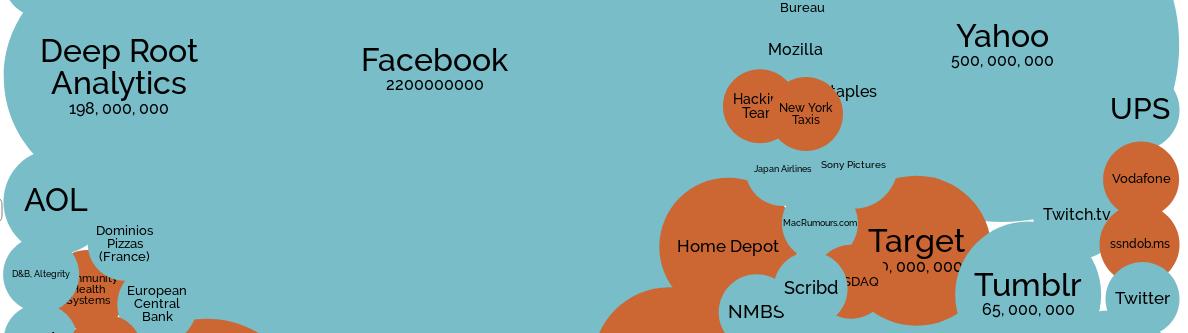

Given that the process of safeguarding personal data through use of encryption is so trivially easy we need to ask why companies that expect us to trust them with our data don’t do it (click on a few of the blobs on the Information is Beautiful chart to see how many times personal data has been stored in clear, plain text form – ie; not encrypted at all).

The answer once again comes back to MONEY. In simple terms, encryption uses processing resource. If a company encrypts large amounts of data, that requires it to use – hence pay for – bigger or more computer hardware. So, it would cost – in relation to the turnover and profit of any arbitrary organisation processing personal data – a tiny bit more to ultimately safeguard that data.

That is how much regard and respect the companies revealed on the Information is Beautiful chart – and the thousands more that aren’t (yet!) on the chart – show to you and your most personal and confidential information.

Your custom and your data are worth billions of £/$/€ to them but while the cost of putting a trifling few billion people at risk of fraud and worse remains less than the cost of saying “Oops – sorry – my bad!” they will continue to run their IT systems with the minimum amount of hardware and pocket the money that data encryption would cost.